# Handling Errors & Fallback

LLM nodes can fail for several reasons: the context can exceed the model's limit, a provider can time out, or a rate limit or transient network error can interrupt the request.

StackAI includes several built-in controls that keep a workflow reliable even when an LLM node errors out:

* **Retry on Failure**: try again for transient failures.

* **LLM Fallback Mode**: switch to a backup model/provider.

* **Fallback Branch** (“On Error”): continue down an alternate path.

### When to use Error Handling

Use these settings when:

* Your workflow is user-facing and must respond every time.

* You depend on external providers with occasional instability.

* You have large prompts or context in some runs but not all.

* You run batch jobs and want structured failure outputs.

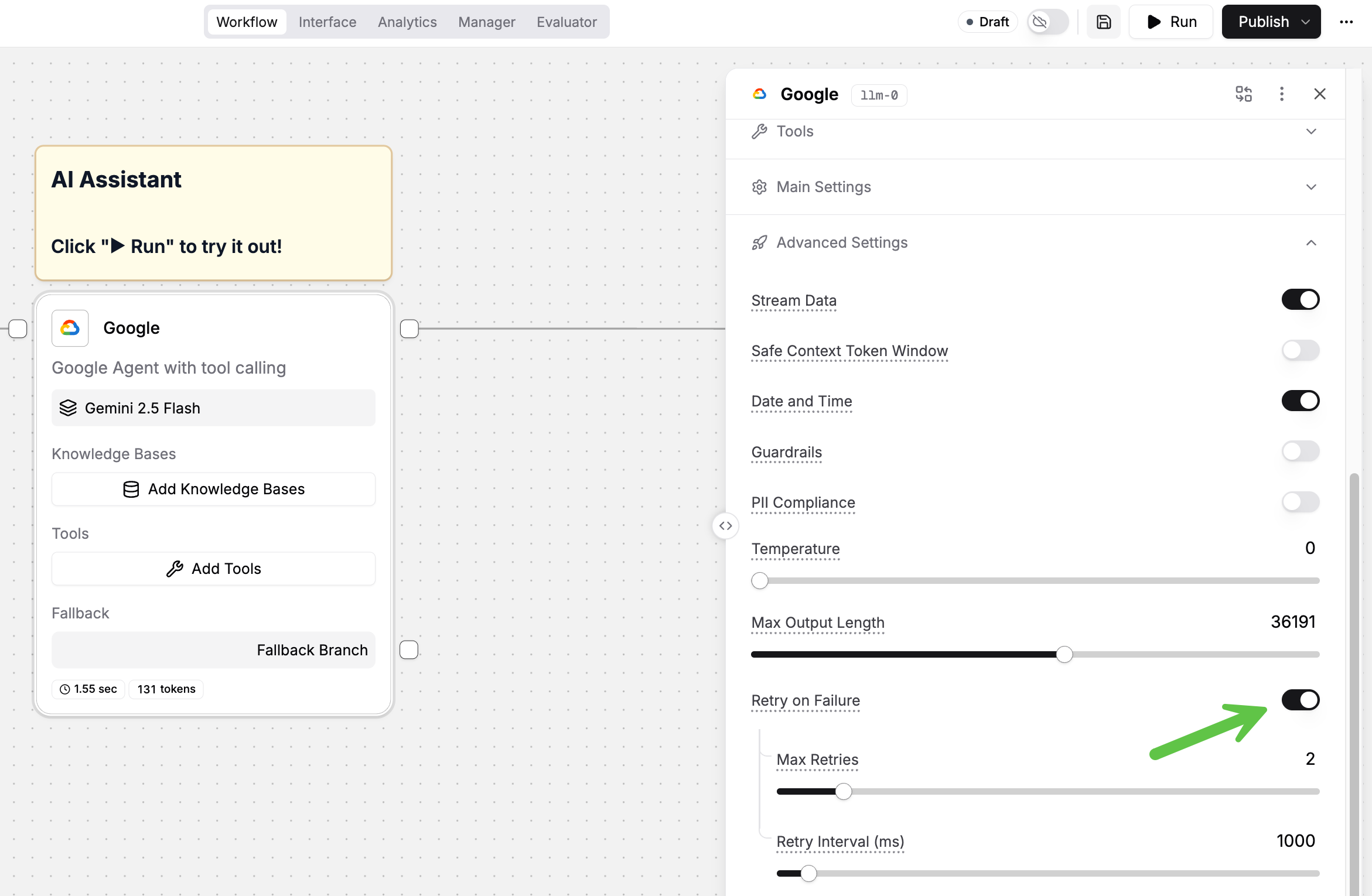

### Retry on Failure

Once you toggle this option on, you can set the maximum number of retries and the retry interval, measured in milliseconds.

Turn this on when failures are likely transient. Typical examples:

* Provider timeouts

* 429 rate limits

* Intermittent network issues

{% hint style="warning" %}

Be careful when retrying nodes with side effects. If a downstream action is not idempotent, retries can duplicate work.

{% endhint %}

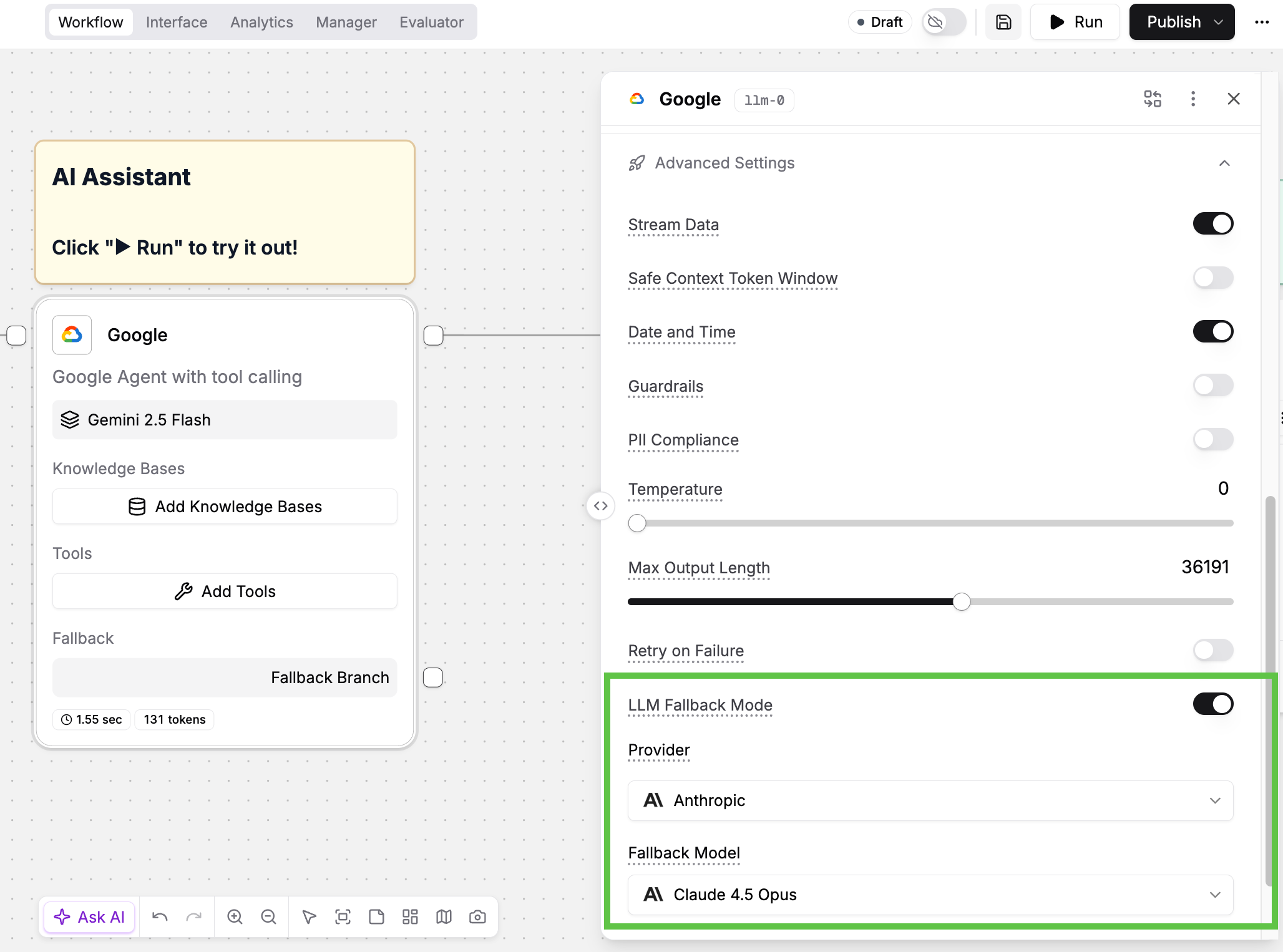
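To make the two settings concrete, here is a minimal Python sketch of what a retry loop with a maximum retry count and a millisecond interval does. It is an illustration only, not StackAI's implementation: `TransientError`, `run_with_retries`, and the parameter names are hypothetical, and the `sleep` argument exists only so the wait can be stubbed out.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for retryable failures (timeouts, 429s, network blips)."""


def run_with_retries(
    call: Callable[[], T],
    max_retries: int = 3,
    retry_interval_ms: int = 500,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run `call`; on TransientError, wait and try again up to `max_retries` times."""
    attempt = 0
    while True:
        try:
            return call()
        except TransientError:
            if attempt >= max_retries:
                raise  # retries exhausted: surface the last error
            attempt += 1
            sleep(retry_interval_ms / 1000)  # interval is configured in milliseconds
```

Note that only `TransientError` is retried in this sketch. Any other exception propagates immediately, which matches the guidance above: retries help with transient failures, not with errors that will fail the same way every time.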

### LLM Fallback Mode

Turn this on to automatically use a backup model if the primary LLM fails.

This helps when:

* a provider is down or degraded

* one model is "flaky" for your workload

* the primary model frequently times out

Practical guidance:

* Pick a backup model from a different provider, or one hosted in a different region.

* Keep output formatting consistent across primary and backup.

* Prefer a “reliable” backup over a “smart” backup for production runs.

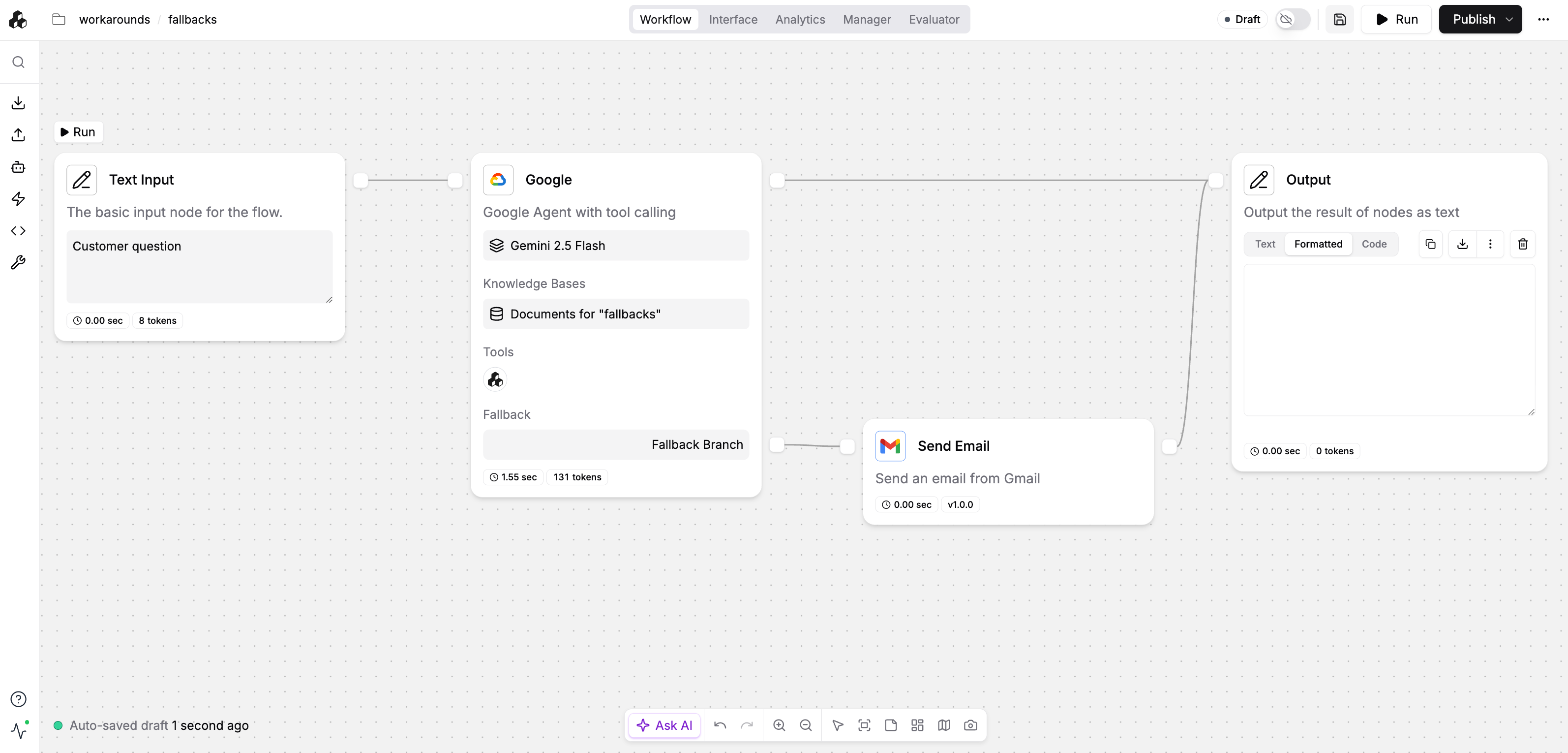
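Conceptually, fallback mode tries the primary model first and moves to the backup only if the primary fails. The Python sketch below shows that idea under stated assumptions: `complete_with_fallback`, `primary_model`, and `backup_model` are hypothetical stand-ins, not StackAI APIs, and the stub models simply simulate a timeout and a successful response.

```python
from typing import Callable, List, Sequence, Tuple

ModelCall = Callable[[str], str]


def complete_with_fallback(
    prompt: str, models: Sequence[Tuple[str, ModelCall]]
) -> Tuple[str, str]:
    """Try each (name, call) pair in order; return (name, output) of the first success."""
    errors: List[str] = []
    for name, call in models:
        try:
            return name, call(prompt)
        except Exception as exc:  # a real integration would catch narrower error types
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all models failed: " + "; ".join(errors))


def primary_model(prompt: str) -> str:
    # Hypothetical stub: simulates a provider that is timing out.
    raise TimeoutError("simulated provider timeout")


def backup_model(prompt: str) -> str:
    # Hypothetical stub: simulates a healthy backup model.
    return f"[backup] {prompt}"
```

Returning the model name alongside the output makes it easy to log which model actually answered, which helps when checking that the primary and backup keep their output formatting consistent.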

### Fallback Branch (“On Error”)

Turn this on when you want the workflow to continue after a failure. You can choose between:

1. **Stop workflow on error**: fail fast and surface the error.

2. **Fallback Branch**: run alternate nodes when this node fails.

Use a fallback branch to:

* return a safe message to users

* emit a structured error object for downstream systems

* notify a human or open a ticket

* try a simpler approach (shorter prompt, fewer tools, smaller context)
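As a rough illustration of the two options and of a structured error object, the Python sketch below wraps a node call and either re-raises (stop on error) or returns a result that downstream steps can inspect. All names here (`NodeResult`, `run_node`, the `on_error` values, and the user message) are hypothetical and chosen for the example, not taken from StackAI.

```python
from dataclasses import asdict, dataclass
from typing import Callable, Optional


@dataclass
class NodeResult:
    ok: bool
    output: Optional[str] = None
    error_type: Optional[str] = None
    error_message: Optional[str] = None
    user_message: Optional[str] = None


def run_node(
    llm_call: Callable[[str], str],
    prompt: str,
    on_error: str = "fallback_branch",  # hypothetical values: "stop" or "fallback_branch"
) -> NodeResult:
    """Run the node; on failure either fail fast or hand back a structured error."""
    try:
        return NodeResult(ok=True, output=llm_call(prompt))
    except Exception as exc:
        if on_error == "stop":
            raise  # fail fast and surface the error
        return NodeResult(
            ok=False,
            error_type=type(exc).__name__,
            error_message=str(exc),
            user_message="Sorry, something went wrong. Please try again.",
        )
```

A downstream system can serialize the result with `asdict(result)` and branch on the `ok` field, while the `user_message` field carries the safe text shown to the user.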

For example, a fallback branch can automatically send an email to the workflow admin whenever the LLM node fails.

### Recommended setup

Consider using this order for most production agents:

{% stepper %}

{% step %}

#### Turn on Retry on Failure

Start with retries to handle transient issues. Keep prompts deterministic if you need repeatable results.

{% endstep %}

{% step %}

#### Configure LLM Fallback Mode

Select a backup model/provider for resiliency. Test that it produces a compatible output shape.

{% endstep %}

{% step %}

#### Add a Fallback Branch for the node

Make failures explicit and recoverable. Return a user-safe output or a structured error payload.

{% endstep %}

{% step %}

#### Make the fallback branch lean

In the fallback path:

* shorten instructions

* reduce retrieved context

* avoid tool-heavy chains

{% endstep %}

{% endstepper %}
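The last step, keeping the fallback path lean, could look something like the Python sketch below: a short instruction, a capped number of context chunks, and each chunk truncated. `build_lean_prompt` and its parameters are hypothetical, and the sketch assumes the chunks arrive already ranked with the most relevant first.

```python
from typing import List


def build_lean_prompt(
    question: str,
    chunks: List[str],
    max_chunks: int = 2,
    max_chunk_chars: int = 500,
) -> str:
    """Build a short fallback prompt from a brief instruction and trimmed context."""
    # Keep only the first few chunks (assumed most relevant) and truncate each one.
    kept = [chunk[:max_chunk_chars] for chunk in chunks[:max_chunks]]
    context = "\n---\n".join(kept)
    return (
        "Answer briefly using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )
```

A smaller prompt lowers the chance that the fallback path hits the same context-limit or timeout failure that triggered it in the first place.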

### Patterns that work well

* **User-facing chat**: fallback branch returns a short apology to the user while notifying the admin.

  * Example: ask the user to retry or re-upload smaller files; in parallel, send a message to the workflow admin about the error.

* **Batch runs**: fallback branch writes an error row and continues the loop.

* **Tool-heavy agents**: fallback branch skips tools and answers from context only.
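The batch pattern can be sketched in a few lines of Python: each item is processed independently, and a failure produces an error row instead of aborting the loop. `run_batch` and the row fields are hypothetical names chosen for this illustration.

```python
from typing import Callable, Dict, Iterable, List, Optional, Tuple

Row = Dict[str, Optional[str]]


def run_batch(
    items: Iterable[Tuple[str, str]], process: Callable[[str], str]
) -> List[Row]:
    """Process (id, text) pairs; record one row per item, whether it succeeds or fails."""
    rows: List[Row] = []
    for item_id, text in items:
        try:
            rows.append(
                {"id": item_id, "status": "ok", "output": process(text), "error": None}
            )
        except Exception as exc:
            # Write an error row and keep going instead of stopping the whole batch.
            rows.append(
                {
                    "id": item_id,
                    "status": "error",
                    "output": None,
                    "error": f"{type(exc).__name__}: {exc}",
                }
            )
    return rows
```

Because every item yields exactly one row, failed items can be filtered by `status` afterwards and re-run on their own.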

### Potential use cases

* **Customer support**: fall back to a simpler “triage” response when tools fail, and escalate to a human agent.

* **Document extraction**: retry once, then fall back to a smaller-context prompt that extracts only key fields.

* **Compliance bots**: fallback branch returns a standardized response such as “insufficient evidence to make a determination” when citations are missing.

* **Web research**: fall back to cached sources or skip web calls when rate-limited.

### See also

* [Advanced Settings](https://docs.stackai.com/workflow-builder/core-nodes/ai-agent-node/advanced-settings)

* [LLM Node](https://docs.stackai.com/workflow-builder/core-nodes/ai-agent-node)

* [How to Improve LLM Performance](https://docs.stackai.com/workflow-builder/core-nodes/ai-agent-node/llm-hosting-and-governance/how-to-improve-llm-performance)